How does semi-supervised learning work?

What is Semi-Supervised Learning?

Semi-supervised learning is a machine learning technique that leverages both labeled and unlabeled datasets to train a model. Typically, in real-world scenarios, labeled data is scarce or expensive to obtain because it requires human annotation, while unlabeled data is abundant and easily collected. Semi-supervised learning bridges the gap between supervised and unsupervised learning by using the small labeled dataset to infer labels for the larger unlabeled dataset and improve the model's performance.

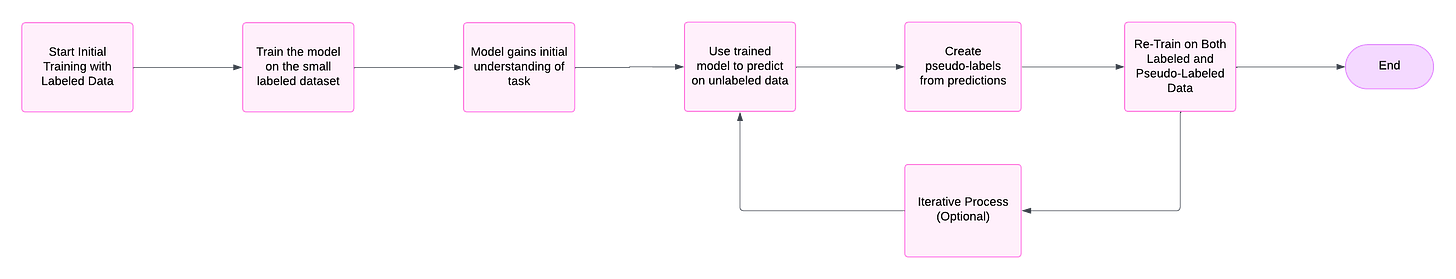

How Does Semi-Supervised Learning Work?

Initial Training with Labeled Data

The process begins by training a model on the small labeled dataset. This provides the model with an initial understanding of the task, such as learning relationships between features and labels.

Generating Pseudo-Labels for Unlabeled Data

The trained model is then used to make predictions on the unlabeled dataset. These predictions are referred to as pseudo-labels: they are the model’s best guesses for the labels of the unlabeled data.

Re-Training on Both Labeled and Pseudo-Labeled Data

The model is re-trained using a combination of the original labeled data and the pseudo-labeled data. This helps the model generalize better, as it effectively learns from a much larger dataset.

Iterative Process (Optional)

In some cases, the process of generating pseudo-labels and re-training can be repeated iteratively to further refine the model’s accuracy.
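The steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production recipe: it assumes a toy nearest-centroid classifier and made-up 2D points, and the helper names (`fit_centroids`, `predict`, `self_train`) are hypothetical, chosen only for this example.

```python
from statistics import mean

def fit_centroids(points, labels):
    """Train a toy nearest-centroid model: one mean point per class."""
    centroids = {}
    for cls in set(labels):
        members = [p for p, y in zip(points, labels) if y == cls]
        centroids[cls] = tuple(mean(coord) for coord in zip(*members))
    return centroids

def predict(centroids, point):
    """Return the class whose centroid is closest to the point."""
    def sq_dist(centroid):
        return sum((a - b) ** 2 for a, b in zip(point, centroid))
    return min(centroids, key=lambda cls: sq_dist(centroids[cls]))

def self_train(labeled_x, labeled_y, unlabeled_x, rounds=3):
    # Step 1: initial training on the small labeled dataset.
    model = fit_centroids(labeled_x, labeled_y)
    pseudo_y = []
    for _ in range(rounds):
        # Step 2: generate pseudo-labels for the unlabeled data.
        pseudo_y = [predict(model, x) for x in unlabeled_x]
        # Step 3: re-train on labeled + pseudo-labeled data.
        model = fit_centroids(labeled_x + unlabeled_x, labeled_y + pseudo_y)
        # Step 4 (optional): the loop repeats steps 2 and 3.
    return model, pseudo_y

# Illustrative data (assumed, not from any real dataset).
labeled_x = [(0.0, 0.0), (4.0, 4.0)]
labeled_y = ["cat", "dog"]
unlabeled_x = [(0.5, 0.2), (1.0, 0.8), (3.5, 3.9), (3.0, 3.2), (0.2, 1.0)]

model, pseudo_y = self_train(labeled_x, labeled_y, unlabeled_x)
print(pseudo_y)
```

After re-training, the centroids are estimated from seven points instead of two, which is the sense in which the model "learns from a much larger dataset".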

Why Does Unlabeled Data Help in the Training Process?

The use of unlabeled data is valuable because even though the labels are missing, the features (patterns, relationships, or structures within the data) still contain useful information. By making use of this information, the model can better understand the underlying data distribution.

For example:

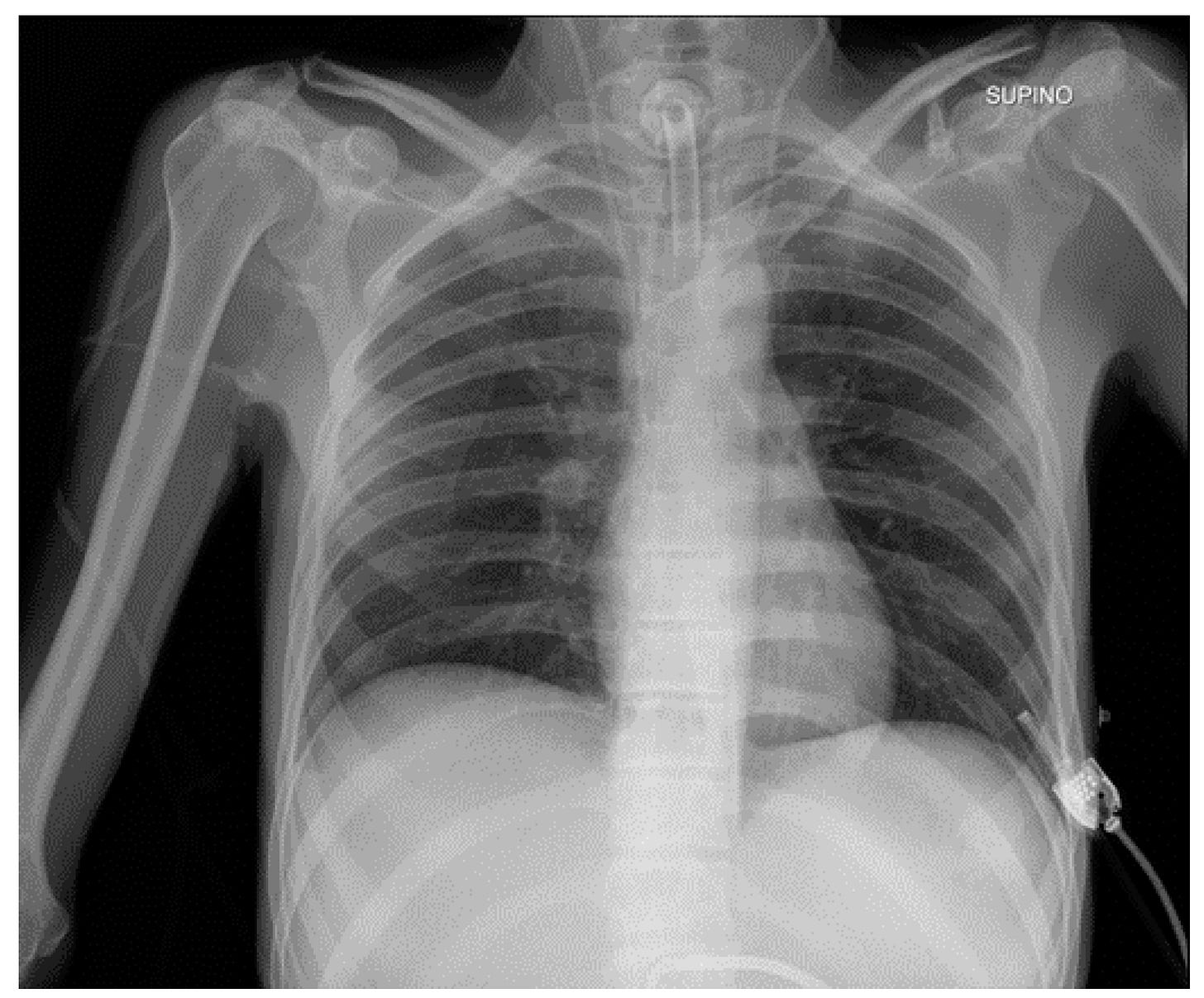

In image classification (e.g., identifying objects in medical scans), the features of similar images (such as pixel intensity or texture) naturally cluster together. A semi-supervised learning model can use the labeled examples to define these clusters, then assign pseudo-labels to the remaining data based on those patterns.

In text analysis, unlabeled documents provide context for word usage and sentence structure, which helps the model learn better representations of the language even without labels.

Why Does Semi-Supervised Learning Work?

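Semi-supervised learning works because of the assumption that similar instances share the same label. This is commonly called the cluster (or smoothness) assumption, and it is closely related to the manifold assumption, under which the data lie near a lower-dimensional structure. In short: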

Instances that are close together in the feature space are likely to have the same label.

For example, in image data, images with similar pixel patterns (e.g., two cat images) likely belong to the same class.
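To make this assumption concrete, here is a small sketch of greedy nearest-neighbour label propagation. Note that this is a different technique from the pseudo-labeling loop described earlier; it is used here only because it shows the "close points share a label" idea directly. The function name `propagate_labels` and the coordinates are assumptions made for illustration.

```python
import math

def propagate_labels(labeled, unlabeled):
    """Greedy propagation: repeatedly label the unlabeled point that is
    closest to any already-labeled point, copying that point's label.

    labeled:   dict mapping point (tuple) -> label
    unlabeled: list of points (tuples)
    """
    known = dict(labeled)
    remaining = list(unlabeled)
    while remaining:
        # Find the (unlabeled, labeled) pair with the smallest distance.
        point, neighbour = min(
            ((u, k) for u in remaining for k in known),
            key=lambda pair: math.dist(pair[0], pair[1]),
        )
        known[point] = known[neighbour]
        remaining.remove(point)
    return known

# One labeled "cat" at x=0 and one labeled "dog" at x=10 (made-up data).
labeled = {(0.0, 0.0): "cat", (10.0, 0.0): "dog"}
# A chain of unlabeled points stretches out from the cat example.
unlabeled = [(1.5, 0.0), (3.0, 0.0), (4.5, 0.0), (6.0, 0.0), (9.0, 0.0)]

result = propagate_labels(labeled, unlabeled)
print(result[(6.0, 0.0)])
```

The point at x=6 is nearer to the labeled "dog" than to the labeled "cat", yet it ends up labeled "cat" because it is connected to the cat example through a chain of close unlabeled neighbours. This is one way the structure of unlabeled data can change the decision.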

Challenges in Semi-Supervised Learning

Incorrect Pseudo-Labels

If the model assigns incorrect pseudo-labels to unlabeled data, it can propagate errors and reduce the overall performance.

Imbalanced Data

If the labeled dataset is not representative of the entire distribution, the model may struggle to generalize to the unlabeled data.

Choosing the Right Model and Algorithms

Not all algorithms are suitable for semi-supervised learning, and selecting the right one requires domain expertise.
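For the first challenge above, incorrect pseudo-labels, one commonly used mitigation is to keep only the predictions the model is confident about. The sketch below assumes the model outputs a probability per class; the function name `select_confident`, the 0.9 threshold, and the sample names are illustrative assumptions rather than recommended values.

```python
def select_confident(predictions, threshold=0.9):
    """Keep only pseudo-labels whose top probability meets the threshold.

    predictions: list of (sample, {label: probability}) pairs
    returns:     list of (sample, pseudo_label) pairs
    """
    selected = []
    for sample, probs in predictions:
        label = max(probs, key=probs.get)  # most likely class
        if probs[label] >= threshold:
            selected.append((sample, label))
    return selected

# Hypothetical model outputs for three unlabeled samples.
predictions = [
    ("scan_01", {"abnormal": 0.97, "normal": 0.03}),
    ("scan_02", {"abnormal": 0.55, "normal": 0.45}),  # too uncertain
    ("scan_03", {"abnormal": 0.08, "normal": 0.92}),
]

confident = select_confident(predictions)
print(confident)
```

Samples that fall below the threshold stay unlabeled and can be reconsidered in a later iteration. A higher threshold admits fewer errors but also adds less pseudo-labeled data.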